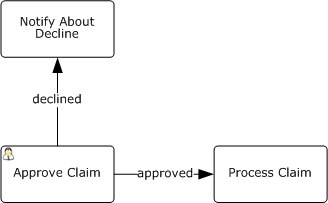

Unfortunately, the question “how to model human decisions in BPMN” isn’t frequently asked.

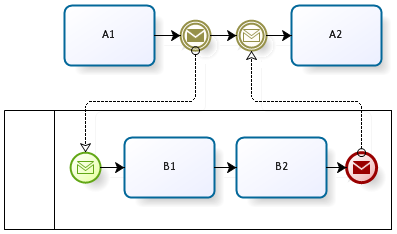

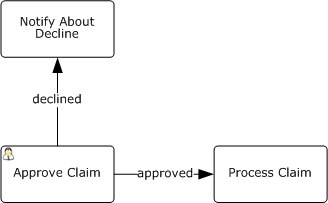

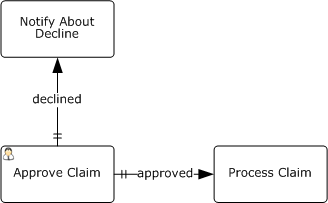

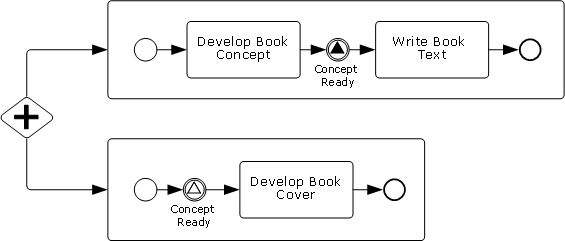

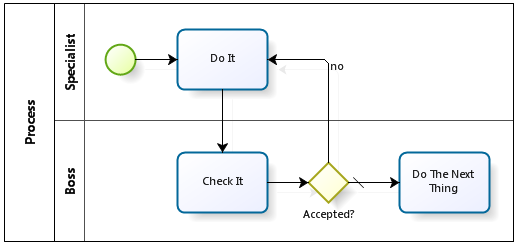

“Unfortunately” because the intuitive answer is wrong. This is not a fork but a parallel execution:

After exiting the “Approve Claim” task the process will continue in parallel on the outgoing flows whatever is written on them.

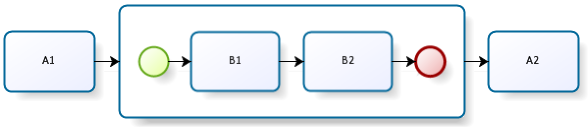

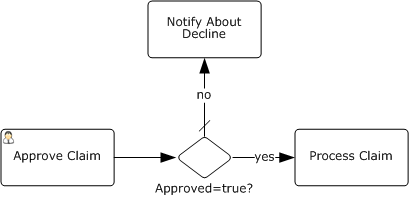

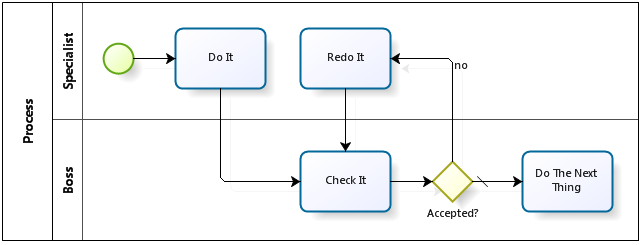

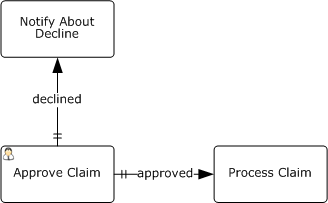

Valid BPMN diagram looks like this:

It’s implied that the process has a boolean attribute “Approved”. User sets this attribute at the “Approve Claim” task, the gateway checks its value and the process continues in one of the flows.

As you can see, BPMN authors didn’t provide a special construct for human decisions but implemented them rather artificially: a special attribute that must be set by a human and checked in the gateway immediately after.

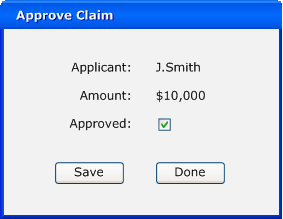

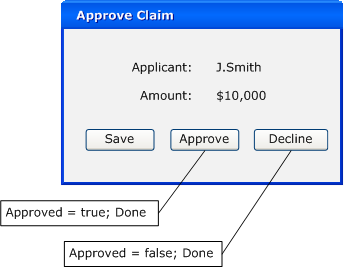

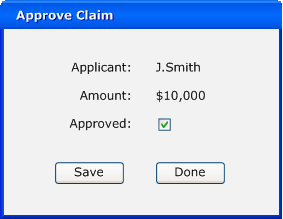

The user interface for the task where the decision is made may look like this:

When “Done” button form is pressed the task is completed.

I agree with Keith Swenson that BPMN misses explicit support of human routings.

Firstly, human-based and automatic routings look alike at a diagram. Yet this is an important aspect of the process.

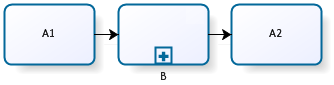

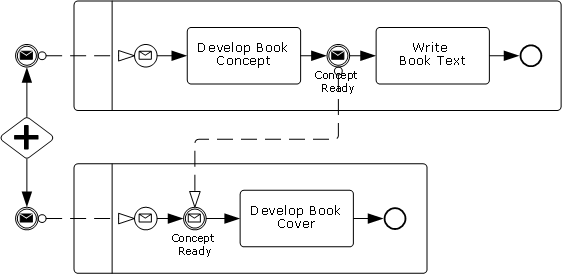

If it was my decision I’d introduce explicit support of human routing into BPMN. Since first diagram above is actually more intuitive than valid BPMN, I’d leverage on it:

The existing flow types - Control Flow, Conditional Flow and Uncontrolled Flow - are extended by Human Controlled Flow here, marked with a double dash.

Another issue are screen forms like the one above which provoke user mistakes: it’s tempting to press “Done” and get rid of the task without paying attention to the attributes.

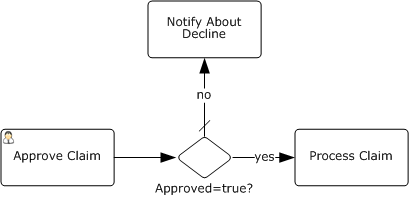

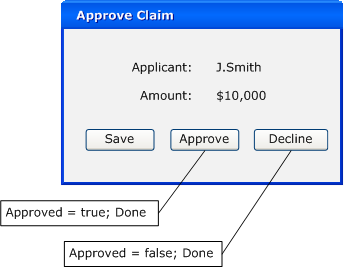

If a decision is requested from a human then the form should look like this:

The buttons could be generated automatically from the process diagram above.

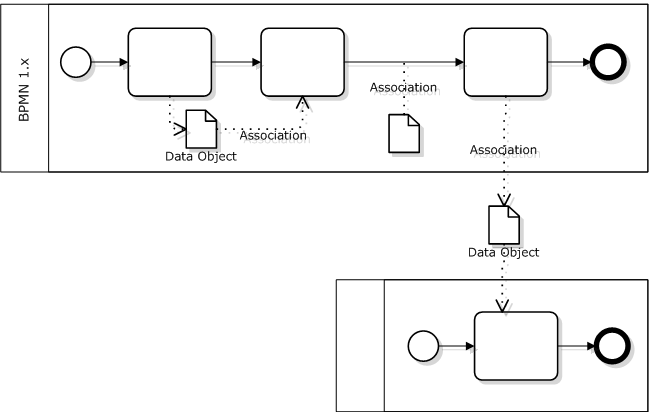

Yet it’s possible to utilize this technique for standard BPMN, too:

“Done” button is replaced by “Approve” and “Deny” here, each of them being bound to two actions: set the attribute value and complete the task.

Now I’m going to use this occasion to appeal to BPMS vendors: please give the opportunity to create more than one button completing the task and bind them to attributes. If you didn’t do it yet, of course.